Setting and measuring outcomes

A guide to identifying, defining and measuring outcomes for impact bonds and other outcomes-based contracts

Overview

In an outcomes-based contract, the achievement of specified outcomes determines how much the providers and/or investors will be paid. As a result, setting appropriate outcomes and measuring them effectively is key to designing and managing these contracts.

Outcomes – what should change as a result of the intervention

The primary outcome is the single most important outcome of the contract. The secondary outcomes include other related outcomes the payer would like to see achieved.

Outcomes may also be divided into hard and soft outcomes. A hard outcome can be objectively and independently measured. A soft outcome depends on subjective measurement, such as an individual’s self-assessment.

Proxy outcomes are strongly correlated to desired outcomes. They may provide an indirect measure of the desired outcome when direct measures are unobservable/unavailable.

Lead/progression outcomes represent steps towards a later outcome, which may be necessary if the primary outcome will take a long time to measure.

Outcome measures – for each outcome, what will show whether or not it has been achieved

Different types of data can be used to assess whether or not a particular outcome has been achieved. Primary data is collected specifically to answer questions about the outcomes detailed in the contract. Administrative data or secondary data is already collected for other purposes.

Measurement may also take place at different levels. Cohort measures assess the overall outcomes of the target group, usually by comparison to another group who were not part of the scheme. Individual measurement assesses whether each individual in the target group has achieved specified outcomes.

When an outcome target is hit, the provider/investor will be paid, and so targets must be achievable for the contract to work.

Attainable stretch targets may also serve to extend the scope of the contract beyond the specified outcomes.

In order to ensure targets are reasonable, contractors should seek to involve all stakeholders in their design process.

Ensuring desired outcomes are achieved

When selecting outcome measures and targets, it is important to guard against perverse incentives. These may include ‘cherry picking’ who is included in the cohort, or ‘creaming’ and ‘parking’ within the cohort, to target those most likely to achieve the specified outcomes.

When choosing metrics, it is also important to attempt to mitigate the impacts of external factors on the outcome measures. These may include changes to government policy, planned service changes, and changes in local practice.

By definition, setting outcomes and measuring them effectively is one of the most important aspects of an outcomes-based contract. For impact bonds and other payment-by-results contracts, the achievement of outcomes will determine how much is paid by the outcome payers to the providers and/or investors. The way outcomes are defined directly affects whether the outcome payers achieve value for money from the contract.

When it comes to setting outcomes, there is no ‘correct’ or ‘set’ way to define outcomes, nor are there universally agreed definitions around many of the core concepts. You should refer to our Glossary for clarity around the key terms used across this guide.

In practice, the process of identifying suitable outcomes and agreeing metrics and targets for measuring them is not a linear one; it usually requires an iterative process and sustained engagement with all the project stakeholders, including those who will be supported through the project or intervention being developed.

Why measure outcomes?

Setting and measuring outcomes is crucial for determining the payments made under an outcomes-based contract. However, there are many other reasons why organisations might seek to focus on outcomes, rather than on inputs or activities. Even traditional fee-for-service contracts and grants can be outcomes-focused when you set outcomes upfront and measure the achievement of them.

Before working out how to set and measure outcomes, it is worth pausing to articulate why you want to do this. There could be many reasons, including a better understanding of the wider impact of a service, enhancing transparency, public accountability and citizen engagement, and a way to encourage collaboration across multiple organisations.

In particular, we think it is important to measure outcomes for three core reasons:

1. To manage performance or learn how to get better

Managing performance and learning how to get better are two sides of the same coin, partly depending on whether you are an outcome payer or provider, as well as the type of relationship you set up in your outcomes-based contract.

The overarching point is that one very good reason to set and measure outcomes is formative. You will use the information to indicate whether a project is moving closer to its ultimate objective or not. You will make decisions based on the information. For example, you might offer some advice to a provider, or offer your team a certain type of training.

In the Educate Girls Development Impact Bond in India there was a strong focus on performance management and data analysis. This enabled the provider organisation to identify early on areas of improvement and adjust programme delivery accordingly. This led to the programme achieving a particularly high increase in test scores in Year 3: in the final year, learning levels for students in programme schools grew 73% more than their peers in other schools. You can read more in the 2018 IDinsight final evaluation report.

Setting and measuring outcomes gives you more information to help you make the right decisions so you can move towards your ultimate objective. It is not enough on its own: you will also want qualitative information to add colour to the numbers. A combination of the two is best.

2. To evaluate whether something works (but not why it works – or doesn’t)

Measuring outcomes is a very important part of the process of evaluation, which we cover in a separate guide; see our guide on evaluating an outcomes-based contract. Some evaluations can be formative as they help with learning how to do better. However, this point refers to the summative evaluation, which comes at the end and gives you an idea of how effective the project was.

An impact evaluation is the most robust approach. It uses quantitative methods (statistics) to show whether or not a project has produced better outcomes than would have happened anyway. Qualitative evaluation (discussions, interviews, stories) is also needed to understand why something worked or didn't. Together, this information can help you and others to decide whether to continue a project, change it, or stop it altogether.

3. To provide a means for payment

Outcomes-based contracts have seen increasing use in the UK and globally in recent years, particularly through instruments such as impact bonds. These are contracts where partial or full payment is made between a government or donor agency and an external provider (or sometimes, between different levels of government) based on a set of pre-agreed outcomes.

The idea is that by paying for outcomes, rather than specifying particular activities (which is a more typical method of contracting), the outcome payer gives the provider more flexibility to deliver the service in a way that will work best, learning as they go along.

Proponents also argue it gives providers a greater incentive for success and reduces the financial risk to the outcome payer if a project fails. To pay for outcomes, you need to be extra thoughtful about how you set and measure them. After reading this guide, you may wish to read the guide to pricing outcomes.

Once you have an understanding as to why you are measuring outcomes, you can then begin developing an outcomes framework.

What is an outcomes framework?

A robust outcomes framework sets the groundwork for your impact bond project. It needs to define the following:

- the outcomes to be used

- the measures to be applied to each outcome, which show whether an outcome has been achieved or not

- the specific targets to be applied to each measure, that determine the level of achievement at which outcome payments will be made

- when measurement takes place

It is worth noting here that in practice an outcomes contract might include a mix of outcomes and outputs, as it is not always feasible to assess the performance of a service or programme solely on the basis of outcomes (e.g. where outcomes might take a long time to realise or would prove difficult to measure in a reliable way).

For example, outcomes-based projects that aim to support young people into sustained employment may include payments for outputs such as placement on, or completion of, a training programme.

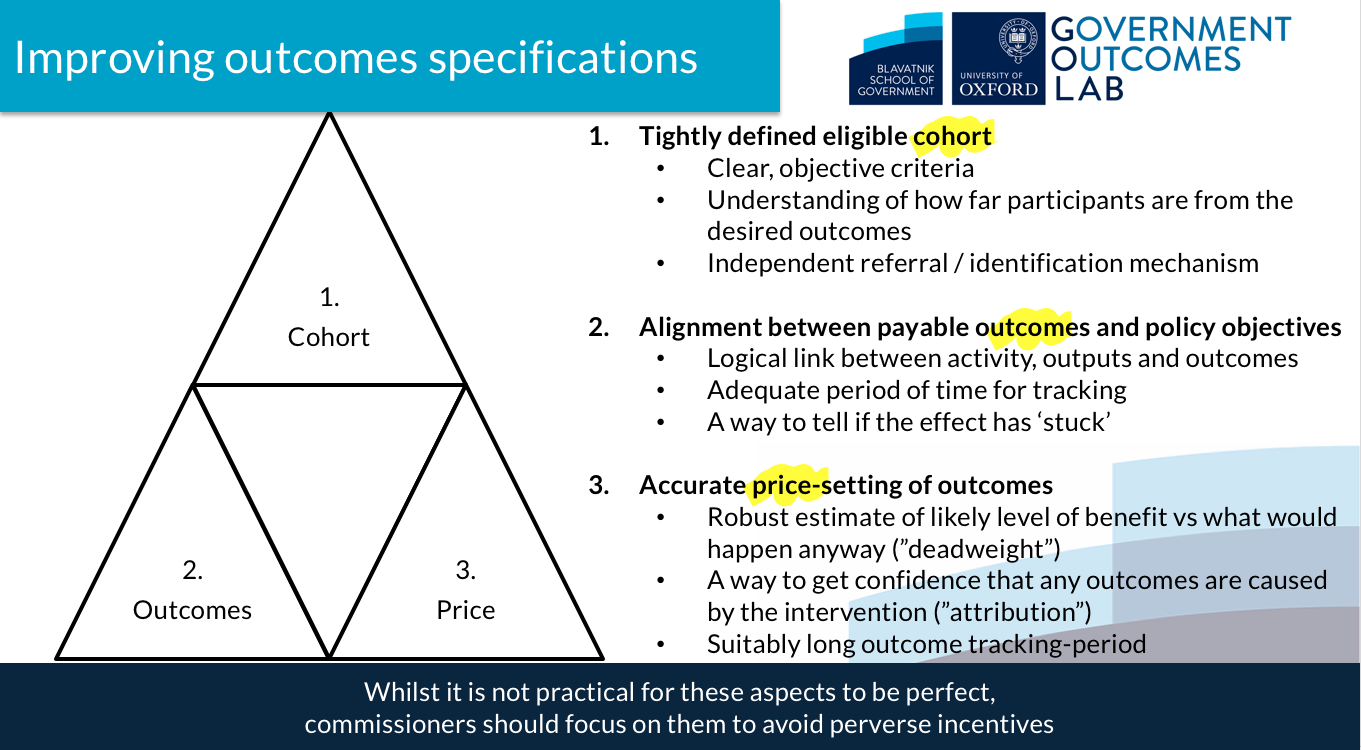

At the GO Lab we have developed a high-level framework that sets out the key components for improving outcomes specifications (see below). The framework highlights that to be effective an outcomes contract needs to be robust on three aspects: the cohort of beneficiaries that the programme will support, the outcomes that the programme aims to achieve, and the price that will be paid for the outcomes.

- outcome is what changes for an individual or group as the result of a service or intervention e.g. improved learning in school, better mental health, etc.

- outcome measure (also termed an indicator) is the specific way the commissioner chooses to determine whether that outcome has been achieved, e.g. a test score. Often this encompasses a single dimension of an outcome.

- outcome target (also termed metric or trigger) is the specific value attached to the measure for the purposes of determining whether satisfactory performance has been achieved. e.g. a test score of 95 out of 100 or improvement of 30 points in a test score over a 5-month period. Under a payment-by-results contract or in an impact bond, these metrics will usually determine whether a payment is made to the provider or investor.

Both measures and targets may relate to what is achieved by and for an individual within the cohort, or to performance across the cohort as a whole.
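To make the distinction between a measure and a target concrete, the sketch below checks the two example targets mentioned above (a score of 95 out of 100, or an improvement of 30 points) for each individual, and then applies the improvement target to the cohort average. The function names and sample scores are illustrative assumptions, not taken from any real contract.

```python
from statistics import mean

# Illustrative thresholds taken from the example targets above.
ABSOLUTE_TARGET = 95      # test score out of 100
IMPROVEMENT_TARGET = 30   # points gained over the measurement period


def individual_target_met(baseline: float, final: float) -> bool:
    """Return True if one person meets either example target."""
    return final >= ABSOLUTE_TARGET or (final - baseline) >= IMPROVEMENT_TARGET


def cohort_target_met(baselines: list[float], finals: list[float]) -> bool:
    """Return True if the mean improvement across the cohort meets the target."""
    gains = [f - b for b, f in zip(baselines, finals)]
    return mean(gains) >= IMPROVEMENT_TARGET


# Hypothetical baseline and final scores for three participants.
baselines = [40, 70, 55]
finals = [75, 96, 80]

individual_results = [individual_target_met(b, f) for b, f in zip(baselines, finals)]
cohort_result = cohort_target_met(baselines, finals)
```

With these made-up scores, two of the three individuals meet a target, yet the cohort-level target is missed because the average gain falls just short of 30 points. The same data can therefore trigger payment under one approach and not the other.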

It is helpful to think about outcomes and measures separately when considering the framework as a whole. It may be possible to define outcomes that fully relate to the policy objective, but measures may not be comprehensive in capturing all facets of an outcome. The fact that an outcome is difficult to measure does not make the outcome invalid, but it may mean it is not appropriate to attach a payment to it (as you might in an outcome-based contract).

It is also advisable to have a fairly settled view of the outcomes and measures to be applied to a contract before starting to assign specific values to them as metrics or triggers.

Some examples of outcomes, measures and targets

| Outcome | The young person is in employment |
|---|---|
| Outcome measure (also termed an indicator) | Confirmation from the employer that the person is employed by them |
| Outcome metric (also termed triggers or targets) | The young person is in continuous employment of a minimum of 16 hours per week for a defined period, or 20% of the total cohort are in continuous employment for a defined period on average |

| Outcome | Improved emotional wellbeing of young people |
|---|---|
| Outcome measure (also termed an indicator) | An identifiable improvement in young people’s resilience and ability to deal with challenges using the Strengths and Difficulties Questionnaire (SDQ) |
| Outcome metric (also termed triggers or targets) | The young person reduces their total SDQ score by a defined number over a specified period, or there is a reduction in the mean score across the cohort as a whole |

| Outcome | People are able to manage their long term condition without hospital treatment |
|---|---|
| Outcome measure (also termed an indicator) | A reduction in the number of hospital admissions by people receiving support in relation to the specified condition(s) |
| Outcome metric (also termed triggers or targets) | A specific reduction (e.g. 1 per person) in the average number of planned or unplanned admissions (or both) across the cohort of those receiving support, compared to the average prior to intervention or the average admissions by a comparison group |

Identifying the right outcomes

With an understanding of the key concepts you will need to start identifying the appropriate outcomes for your project. This chapter starts with a checklist for identifying outcomes. It then goes into more detail about the different types of outcomes there are, so you can consider what might be appropriate for your project.

It is important to note that there are no hard and fast rules about what constitutes the “right” set of outcomes and measures for a particular project or contract. Project teams should expect that the process of developing outcomes will be both iterative and progressive. They should seek to work with all stakeholders, including service users, to ensure that outcomes are meaningful for service users and align with the ultimate policy goal.

Stakeholders may have different perspectives on what constitutes a robust and viable outcome measure and it is crucial to negotiate as you go along. In most cases, the task of setting outcomes is likely to proceed incrementally as you develop the business case. It will be informed and influenced by other decisions, particularly pricing outcomes.

A couple of definitions:

Iterative – It is likely that while developing an outcomes framework many outcomes will be discarded and there is an element of trial and error. For example, if there are challenges to do with data collection, the outcome may not work.

Progressive – In the early stages, outcomes do not need to be specified and defined in great detail, but as work progresses they will need a closer definition. Ultimately, they will need to be clearly and unambiguously defined in the contract.

Checklist for identifying outcomes

These are some of the questions you will need to consider when identifying outcomes.

- Can you narrow down to one primary outcome and several secondary outcomes?

- Do the outcomes align to the policy objectives/ overall goals of the project? This includes the social problem that the contract aims to address, as well as the financial benefits it will bring.

- What hard or soft outcomes will you use?

- Do you need proxy outcomes if your outcomes are difficult to measure directly?

- Do you need to set outcomes that show progression or will a binary yes/no be enough?

- Are the outcomes achievable through a social intervention?

- Are they acceptable to all stakeholders?

- Do they reflect the priority of the service users? Has this been tested?

Some (more) working definitions

When thinking about the outcomes that you will include in your contract (and link payment to) it is helpful to think about the distinction between different types of outcomes and the implications of using them.

Primary and secondary outcomes

Primary outcome – this is the single most important outcome from the contract. It is the one that the outcome payer most wants to see positively impacted. It is likely to reflect the most important outcome for the outcome payer in policy terms and/or to deliver the greatest financial benefit to them.

Secondary outcome – these are the other important outcomes that the outcome funder wishes to see improved. They may capture a different dimension of the programme intent and help to reinforce and sustain the primary outcome. They may capture stakeholder interests that are not reflected in the primary outcome and offer further benefits to the outcome payer. They may also counterbalance perverse incentives.

Limiting the number of outcomes, and classifying them as primary or secondary, makes the contract easier to manage, as having multiple outcomes makes it harder to predict when they will occur. In an outcomes-based contract, including multiple payment outcomes can make it harder for outcome payers to forecast spending (this is particularly challenging when the outcome funder is a government agency). It can also be harder for service providers (and investors) to predict income and cashflow.

At this point it will be useful to refer to the pricing outcomes guide to understand how outcome measures are linked to payment.

In some contracts, the outcome funder may wish to measure a larger number of outcomes than the norm. In these cases, we recommend defining and specifying outcomes that will be measured but will not be linked to payment. Outcome funders should however take account of the cost and effort required to collect and monitor data on any further outcomes – both to themselves and to providers and investors.

Hard and soft outcomes

Hard outcomes

Hard outcomes can be objectively and independently measured. For example, a child being in or out of care or an adult being employed. They can often be underpinned by a statutory process, such as a child being on a Child Protection Plan – there is no doubt about this as it requires a formal decision by a local authority.

The main advantages are:

- there is little room for disagreement

- easy to measure

- often the measurement data already exists

The main disadvantages are:

- when used in isolation they can give an incomplete picture of the value of the outcome to the individual

- they can bias the measurement towards outcomes that are not significant to the experience of the individual, e.g. incentivising a child staying out of care assumes that they will be better off in the long-run staying in the family

- they may not capture the sustained impact of the service, e.g. a young person may re-engage in education but may drop out after a short period

A binary outcome is a type of hard outcome that has only two states – either an outcome is achieved or it is not. They are used where it is deemed unacceptable for the public sector to pay for outcomes that include negative events. For example, outcome payers may run a project to reduce re-offending, and may wish to track its reduction – but this is not binary, and offences will still have been committed. Outcome payers should consider the political impact of the measurement and whether a binary outcome may be most appropriate.

Soft outcomes

Soft outcomes depend on measurement which is more subjective, e.g. an individual’s self-assessment. They are nearly always measured at the level of the individual or family rather than across the cohort. Soft outcomes are usually set and measured by referring to proprietary measurement methods, such as the Outcomes Star family of tools or the Strengths and Difficulties Questionnaire (SDQ).

The main advantages are:

- they can indicate progress towards a hard outcome that may take time to achieve

- they can add a richer picture of what is going on, compared to using solely a hard outcome, which may be misleading on its own

The main disadvantages are:

- The quality of the measurement tool used can vary. There are many hundreds of measures out there for soft outcomes, and selecting the most suitable and robust one is difficult and time-consuming.

- There is increased risk of the measurement being skewed (or even deliberately distorted) by the way the assessment is carried out.

Below are two examples of setting outcomes and how they divide into hard and soft. Both examples are from our case studies, which provide an in-depth look into many aspects of the projects in question.

West London Zone

The West London Zone supports disadvantaged young people to flourish in all aspects of their life. They have link workers in local children’s centres, schools and employment agencies to help achieve these outcomes:

Hard outcomes – Increase in school attendance, improved attainment in English and maths

Soft outcomes – improved social and emotional skills, peer relationships and conduct. These are measured through an improvement in SDQ score (strengths and difficulties questionnaire)

Ways to Wellness

The Ways to Wellness SIB, an impact bond contracted by the Newcastle Gateshead Clinical Commissioning Group in the UK, measures improvements in the management of long-term conditions achieved through social prescriptions.

Hard outcomes – reduced hospital admissions, reduced use of outpatient and A&E services

Soft outcomes – improved wellbeing, measured through Triangle Consulting’s Wellbeing Star

Proxy outcomes

As identified earlier in our framework for designing robust outcomes, it is important that the outcomes you measure (and incentivise) are closely linked to the policy intent or overall goal of the project. One way to do this is through so-called ‘proxy outcomes’.

Proxy outcomes have been defined by The Centre for Government (US) as an “indirect measure of the desired outcome which is itself strongly correlated to that outcome used when direct measures of the outcome are unobservable and/or unavailable”.

As the name suggests, a proxy outcome acts in place of the actual outcome you are interested in. It is something that tends to go hand-in-hand with the desired outcome – if one is achieved, the other is achieved and vice versa. But beware – correlation does not mean causation so you may end up paying for outcomes which have little to no effect on the actual policy intent of the outcomes-based contract.

Many soft outcomes and measures are used as a form of proxy on the basis that they will indicate achievement of another outcome that is difficult to measure.

There are many examples of proxy measures and how they can help when outcomes are not easily measured, but there are challenges too.

- Children leaving care: the primary outcome is that children leave care and have secured stable and independent living. The proxy outcome can be increased resilience. This is challenging to measure exactly as it is subjective (a ‘soft’ measure), but it can be one of many indicators that the child is ready to leave care.

- Long-term health conditions: the primary outcome is that people are managing their long-term health conditions well. The proxy outcome is reduced hospital admissions. The downside is that reduced hospital admissions may be due to other conditions having higher priority, or external factors such as longer waiting times. A reduction does not necessarily indicate a positive action.

- People who are unemployed: the primary outcome is that people will find secure employment. The proxy outcome is a reduction in benefits. However, it may be the case that people are no longer claiming benefits without having found work.

- People who have been in prison: the primary outcome is that there is a reduction in reoffending. The proxy outcome may be reduced conviction rates. However, many offences may go undetected so it is likely to underestimate both the scale and potential severity of offences committed.

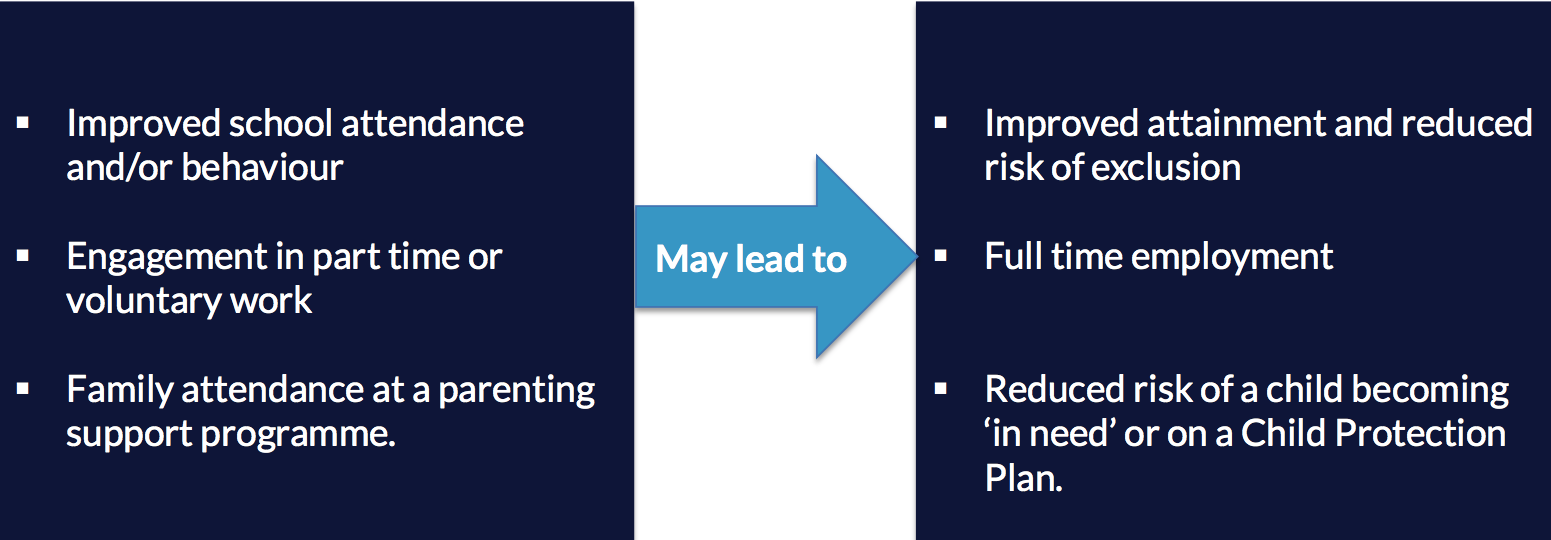

Lead/progression outcomes

Lead or progression outcomes show movement towards a later outcome. This may be necessary if the primary outcome takes a long time to measure and commissioners want reassurance in the meantime that the outcome is likely to be achieved.

For example, for children with highly complex needs in foster care, a lead measure might be better attendance at school, where the evidence indicates this increases the likelihood of the child maintaining a stable foster care outcome. There is also value and impact for the individual and the service from that outcome in its own right. The boxes below offer more examples of how progression outcomes can lead to other greater outcomes.

Lead or progression measures are also used when there is a low likelihood of achieving the primary outcome for the entire cohort, because people have different levels of need. Some people may therefore require a different starting point, and acknowledgement that they are meeting some intermediary outcomes along the way.

In an outcomes-based contract, payment against these lead or progression measures may help to mitigate the risk of “parking”. This is where the provider chooses not to work with certain individuals who are too far from achieving the outcome. However, this can considerably complicate the design of the payment mechanism.

The main disadvantage of lead or progression measures is that providers may not invest in moving the cohort towards the primary outcomes if the payment terms provide significant reward at this earlier stage. The level of payment attached to such outcomes, and the number that can be claimed across a cohort, needs to be set with care.

Measuring outcomes

Checklist for measuring outcomes

These are some of the questions the commissioner will need to answer when measuring outcomes:

- What data will you use to measure outcomes? Is there data available from other sources to measure outcomes, e.g. internal performance management, school attendance registers?

- If data is not available, does there need to be significant investment for new collection processes and systems?

- Who will be responsible for collecting the data and do they have the capacity to do so?

- If someone else is collecting data, does the data need to be independently checked and validated?

- Will outcomes be measured for the individual or across the cohort?

As measuring the outcomes is about knowing what the impact of the project is, it is important to consider evaluation at this point. Refer to our technical guide to understand what you need to consider for your evaluation.

Data collection

In terms of measuring outcomes, the method of data collection is crucial. You will need to find out whether the data required is already collected for other purposes. Assumptions should not be made about the availability of data from other parties or the ability of those parties to collect data on your behalf. If certain data is needed to measure an outcome, its availability, the legality and practicality of collection should always be confirmed.

For service providers, setting up additional data collection and performance monitoring systems can be expensive. The costs and administrative burden of this should be weighed when deciding on the type of data that will be collected and used to measure outcomes. It is helpful to consider how you can use the performance monitoring systems to track performance and inform decisions across the organisations (not just on the outcomes-based project).

The table below sets out the types of data and some of the pros and cons of each approach.

| Data type | Pros | Cons |
|---|---|---|
| Administrative data | | |
| Primary data | | |

Cohort and individual measurement

The success of the contract in achieving better outcomes (and triggering payment where appropriate) can be measured in two different ways: the individual (or family unit), and the cohort.

If you are designing an outcomes-based contract that makes payment contingent on achievement of outcomes, choosing individual or cohort measures will impact on the payment structure. For example, many SIB contracts in the UK have used a rate card linked to individual outcomes because this can be cheaper to manage, and can be simpler than a cohort level measurement approach. To understand more about this, look at the pricing outcomes guide.

The outcome funder needs to consider which is most appropriate for the characteristics of the cohort and this is highlighted below.

Individual outcome measurement

Works best with a homogenous cohort. When all members of the cohort experience the same or similar adverse outcomes the outcome target can be set at an individual level, e.g. avoiding entry to care for a cohort who are all at risk of entering care.

A comparison group is not required. As the individual is assessed and this directly relates to the outcomes there is no need to set up a comparison group. Changed or improved outcomes can be specified on a rate card that shows the payment amounts per individual when an outcome is achieved.

Does not allow for what would have happened anyway (the ‘deadweight’). If there is no comparison group, the outcomes achieved are likely to include some that would have happened anyway, without the intervention. This may be because of other positive things happening in that individual’s life, or due to natural statistical variation. This can only be avoided if the outcome payer has a reliable way to estimate what would have happened anyway, known as the deadweight. If this is not done, there is significant risk that the provider/investor will be rewarded for achieving too little impact. The payment level should be adjusted downwards accordingly.
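One simple way to reflect deadweight in a rate card, assuming the outcome payer has an estimate of the share of outcomes that would have happened anyway, is to scale the price per outcome down by that share. The sketch below is an illustration only: the deadweight rate, the gross value and the function name are hypothetical, and real contracts may adjust for deadweight in other ways.

```python
def deadweight_adjusted_price(gross_value: float, deadweight_rate: float) -> float:
    """Scale the value of an outcome by the share attributable to the intervention.

    deadweight_rate is the estimated proportion of outcomes (0 to 1) that
    would have been achieved anyway, without the intervention.
    """
    if not 0 <= deadweight_rate <= 1:
        raise ValueError("deadweight_rate must be between 0 and 1")
    return gross_value * (1 - deadweight_rate)


# Hypothetical figures: each outcome is valued at 1,000, and an estimated
# 25% of the cohort would have achieved it without support.
price = deadweight_adjusted_price(1000, 0.25)

# If 40 individuals achieve the outcome, the payer pays the adjusted price for each.
total_payment = price * 40
```

In this example the payer pays 750 rather than 1,000 per outcome, so the total for 40 outcomes is 30,000 rather than 40,000.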

Cohort outcome measurement

Works best if adverse outcomes vary considerably. It is harder to set standard measures of success at the individual level if the cohort is made up of different individuals, e.g. the frequency and severity of offending can vary widely and a rate card could not be used.

Usually requires a comparison group – this is needed so the outcomes achieved through the contracted intervention can be compared against those of a group who did not receive the service. This is known as a counterfactual and is there to check that the outcome would not have happened without the intervention. In an outcomes-based contract, this can be used to assess whether payments should be made.

Does not require separate calculation of what would have happened anyway (“deadweight”) – any deadweight will be reflected in the measurement against the comparator group, and only performance over and above the comparator group will be rewarded.
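The cohort approach described above can be sketched as a comparison of average outcomes between the supported cohort and the comparison group, with payment due only on the difference. This is a minimal illustration with made-up admissions data and a hypothetical price; a real evaluation would also test whether the difference could have arisen by chance, which this sketch ignores.

```python
from statistics import mean


def cohort_impact(cohort_outcomes: list[float], comparison_outcomes: list[float]) -> float:
    """Difference in mean outcome between the comparison group and the supported cohort.

    A lower value is better here (e.g. hospital admissions per person), so a
    positive result means the supported cohort did better than the comparison group.
    """
    return mean(comparison_outcomes) - mean(cohort_outcomes)


def cohort_payment(impact: float, price_per_unit: float, cohort_size: int) -> float:
    """Pay only for performance over and above the comparison group."""
    return max(impact, 0) * price_per_unit * cohort_size


# Hypothetical admissions per person over the measurement period.
supported = [1, 0, 2, 1]    # mean of 1 admission per person
comparison = [2, 1, 3, 2]   # mean of 2 admissions per person

impact = cohort_impact(supported, comparison)
payment = cohort_payment(impact, price_per_unit=500, cohort_size=len(supported))
```

Because the comparison group already reflects what would have happened anyway, no separate deadweight adjustment appears in the calculation. If the supported cohort does no better than the comparison group, the payment is zero.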

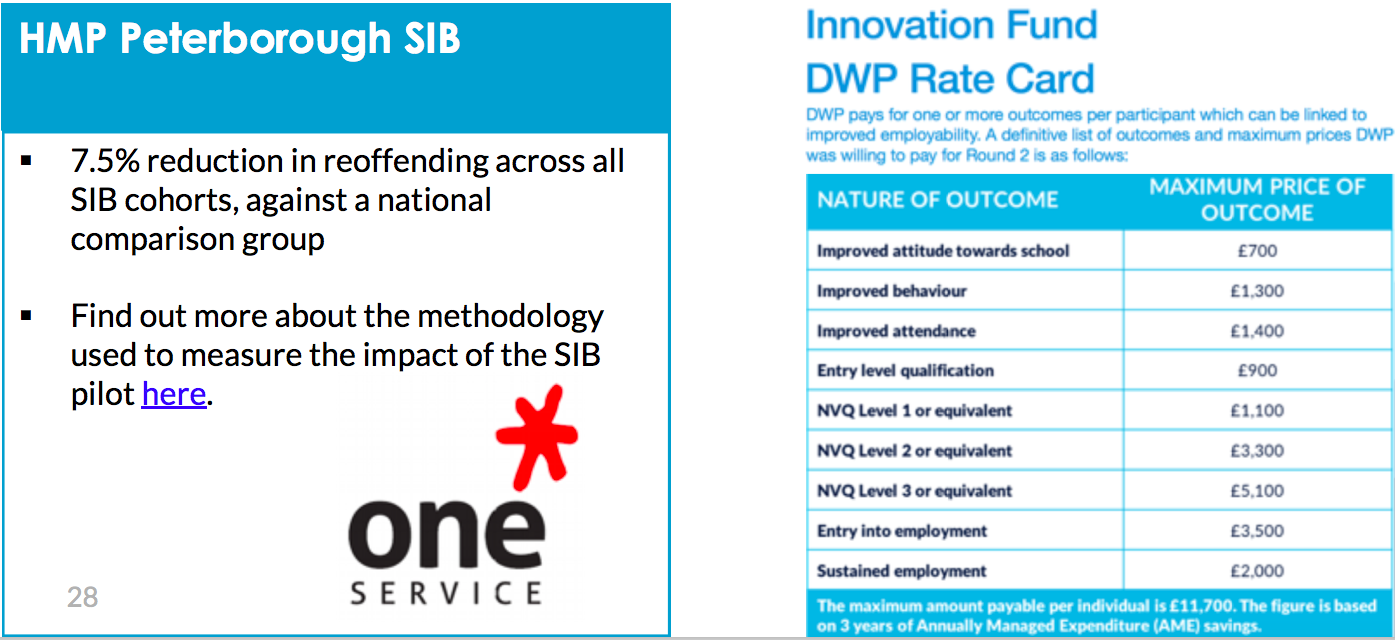

Example cohort vs individual

The One Service impact bond in Peterborough, UK used a cohort measurement. You can find out more about the methodology used to measure the impact of the project here.

The Innovation Fund SIBs funded by the Department for Work and Pensions in the UK used an individual measurement. This was done through a rate card whereby the price was set for each outcome. You can read more about rate cards in the pricing outcomes guide.

In the international development space, the Educate Girls DIB used cohort-wide measurement. The Quality Education India (QEI) DIB built on the learning from the Educate Girls experience and opted for a payment-per-beneficiary model.

The creation of an education rate card was one of the stated objectives of the QEI DIB. This set out the costs of delivering specific outcomes, and can be used by government and funders to make informed policy and spending decisions. This is seen as a way to streamline and make more effective the process of paying for education outcomes.

Setting the right outcome target

This chapter sets out a checklist for setting outcome metrics, before going into greater detail about the considerations that need to be made.

Please note that this should not be viewed in isolation as when outcomes are set for an outcomes-based contract, there will need to be decisions made about pricing outcomes, and how you evaluate the project.

Checklist for setting outcome targets

There are several considerations that need to be made when setting outcome metrics:

- Is there evidence about the level of performance that can be reasonably achieved?

- Would stretch targets be appropriate?

- Have providers and social investors been involved in the decision-making process?

- Do the outcome measures avoid or mitigate perverse incentives?

- Have you considered any uncontrolled external factors that have the potential to impact on the outcome measurement?

Achievability and stretch targets

Where an outcome funder is uncertain whether the performance level they are seeking is reasonable and attainable, they should test their thinking with service providers (and investors, if it is an impact bond contract). There may be a question as to whether there should be stretch targets for providers under a contract. This is only advisable if they can be achieved. If they are uncertain whether a stretch target is achievable, they can test their thinking with providers and investors during the development and implementation stages.

In a contract that incentivises skills attainment by young people, there may be a metric (and payment) relating to:

- the young person engaging in skills training (a lead/progression metric)

- the achievement of a first education qualification (the primary outcome metric)

- the achievement of a second education qualification (a stretch metric).

In a contract that rewards the achievement of employment there may be similar metrics relating to the person:

- engaging with the programme (a lead/progression metric)

- being in full-time employment for 13 weeks (the primary outcome metric)

- sustaining full-time employment for 26 weeks (a stretch metric, looking at the outcome being sustained)
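The employment example above can be sketched as a simple payment calculation. This is an illustrative sketch only: the prices, the `Participant` record and the `payment_for` function are assumptions made for the example, as the guide does not specify any payment amounts.

```python
from dataclasses import dataclass

# Hypothetical prices per metric (not taken from any real contract).
PRICES = {
    "engaged": 500,              # lead/progression metric
    "employed_13_weeks": 3000,   # primary outcome metric
    "employed_26_weeks": 1500,   # stretch metric
}


@dataclass
class Participant:
    engaged: bool
    weeks_in_full_time_employment: int


def payment_for(p: Participant) -> int:
    """Add up the payments triggered by one participant."""
    total = 0
    if p.engaged:
        total += PRICES["engaged"]
    if p.weeks_in_full_time_employment >= 13:
        total += PRICES["employed_13_weeks"]
    if p.weeks_in_full_time_employment >= 26:
        total += PRICES["employed_26_weeks"]
    return total


cohort = [
    Participant(engaged=True, weeks_in_full_time_employment=0),
    Participant(engaged=True, weeks_in_full_time_employment=14),
    Participant(engaged=True, weeks_in_full_time_employment=30),
]
total_payment = sum(payment_for(p) for p in cohort)
print(total_payment)  # 9000 with the hypothetical prices above
```

In this sketch the stretch payment is only released on top of the primary payment, so a provider cannot earn it without first achieving the primary outcome.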

Involving all stakeholders

Involving all stakeholders matters especially in outcomes-based contracts where outcomes form all or part of the payment. It could be argued that ensuring the outcomes framework is acceptable to all parties is the single most important consideration. If the outcome payer is prepared to pay for the achievement of an outcome, and providers and investors are comfortable having success measured by the achievement of the same outcome, a successful contract is likely. Conversely, if one or more parties are not happy with the proposed outcome measure or the performance level required to achieve payment, it will be very difficult to conclude a contract successfully.

Outcome payers should consult other parties while designing the outcomes specifications and should do so prior to any competitive process (where a public contract is to be awarded). This will help them judge how acceptable the proposed framework is. It will also allow them to benefit from providers’ (and investors’) previous experience of similar contracts and of the outcome measures and metrics used then.

A 2019 evaluation of four DIB pilots funded by DFID (forthcoming) highlights that:

Outcome metrics and targets work best when returns to investors and outcome funders, and respective incentives, are aligned. Developing outcome metrics and rate cards that are understood by all stakeholders and linked to other metrics within the sector/country can increase the value of the learning generated, and also facilitate the broader DIB market and/or potential transition to a SIB. It is noted that there can be a tension between using a robust model and using a less robust model that is aligned with measures used by others in the sector.

Gaming and perverse incentives

When agreeing outcome metrics, it is important to identify ways to avoid or mitigate perverse incentives. It is not only outcomes-based contracts that are prone to these (although they may be especially vulnerable) – to some extent, any effort to measure outcomes is at risk of this. Perverse incentives encourage contract stakeholders to behave in a way that is detrimental to contractual goals even if some outcome metrics improve. The result of perverse incentives can be negative consequences for service users. They can occur for all parties of a contract, not just providers or investors.

There are two main forms of perverse incentive. Each is discussed below, with examples and ways to mitigate it.

1. Exerting influence on who is eligible for the service - ‘cherry picking’

Sometimes providers, investors or intermediaries may select beneficiaries who are more likely to achieve the expected outcomes and leave the most challenging cases outside the cohort. This is known as ‘cherry picking’.

An example of this is when an outcome is chosen that seeks to achieve an absence or reduction of referrals to a statutory agency. The perverse incentive is to simply not refer people even if they fit the criteria for statutory support. This will make it look like there has been a reduction even though it is not in the best interests of the beneficiaries.

To mitigate this there should be a collective decision-making process involving both the outcome funder and service provider, or a neutral referral party/mechanism. This provides the opportunity for all parties to make and agree decisions that are in the best interests of the beneficiary and avoid ‘cherry picking’.

2. Choosing who to work with in the cohort - ‘creaming’ and ‘parking’

In some instances, providers may choose particular people from the cohort who are already nearer to the desired outcome and therefore are easier to support – this is known as ‘creaming’. The opposite of this can also happen whereby providers neglect people who are less likely to achieve positive outcomes – this is known as ‘parking’.

This may occur when an outcome target does not consider the varying levels of need across the cohort. For example, an outcome measure may be ‘an employment support programme requires 50 people to be in employment in 12 months’. The cohort may be made up of people who have very different needs. More challenging cases, such as people with complex needs and who are long-term unemployed, may be ‘parked’, while those who are likely to find it easier to get a job may receive more support. To mitigate this, the outcome metric could be structured so that each individual’s starting point is recognised and the progress they make is rewarded. (See lead/progression outcomes.) This will mean that variation in the cohort is accounted for.
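To illustrate how recognising the starting point changes the incentive, the sketch below compares a binary payment with a progress-based payment. The stage scale, prices and function names are all hypothetical and are not drawn from any actual contract.

```python
# Hypothetical progression scale, from furthest from work to the desired outcome.
STAGES = ["not engaged", "engaged", "in training", "job-ready", "in employment"]

BINARY_PRICE = 4000      # hypothetical: paid only if the person ends in employment
PRICE_PER_STAGE = 1000   # hypothetical: paid for each stage of progress made


def binary_payment(end_stage: int) -> int:
    """Pay only when the final stage is 'in employment'."""
    return BINARY_PRICE if end_stage == len(STAGES) - 1 else 0


def progress_payment(start_stage: int, end_stage: int) -> int:
    """Pay for each stage gained relative to the person's starting point."""
    return max(0, end_stage - start_stage) * PRICE_PER_STAGE


# Person A starts 'job-ready' (stage 3) and moves into employment (stage 4).
# Person B starts 'not engaged' (stage 0) and becomes 'job-ready' (stage 3).
print(binary_payment(4), progress_payment(3, 4))  # 4000 1000
print(binary_payment(3), progress_payment(0, 3))  # 0 3000
```

Under the binary metric, supporting Person B earns the provider nothing despite substantial progress, which is the condition under which ‘parking’ becomes attractive. Under the progress-based metric, that progress is paid for.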

It may also occur when there is a simplistic binary outcome that results in no support being given after a certain milestone – a ‘cliff edge’. For example, a homelessness project may seek to settle 20 homeless people in 12 months. If people fail to achieve this outcome they are no longer of value to the provider and are ‘parked’. To mitigate this, the target should reward success at small and regular intervals, or offer bonus payments. The number of homeless people settled could be measured at 12 months, 18 months and 24 months, and bonus payments could be set to encourage people to settle in accommodation.
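One way to picture staged measurement is sketched below. It assumes, purely for illustration, a fixed payment for each person newly settled by each checkpoint and a one-off bonus once the target of 20 is reached. The amounts and the `staged_payments` function are hypothetical.

```python
CHECKPOINTS = (12, 18, 24)   # months at which settlement is measured
PAYMENT_PER_PERSON = 1000    # hypothetical payment per newly settled person
TARGET = 20                  # target number of people settled
BONUS = 5000                 # hypothetical one-off bonus for reaching the target


def staged_payments(settled_months):
    """Return the payment due at each checkpoint.

    settled_months holds, for each person, the month in which they were
    settled, or None if they have not been settled.
    """
    schedule = {}
    already_paid = 0
    bonus_paid = False
    for checkpoint in CHECKPOINTS:
        settled = sum(1 for m in settled_months if m is not None and m <= checkpoint)
        payment = (settled - already_paid) * PAYMENT_PER_PERSON
        if settled >= TARGET and not bonus_paid:
            payment += BONUS
            bonus_paid = True
        schedule[checkpoint] = payment
        already_paid = settled
    return schedule


# 15 people settled in month 10, 4 in month 15, 2 in month 20, 1 not settled.
cohort = [10] * 15 + [15] * 4 + [20] * 2 + [None]
print(staged_payments(cohort))  # {12: 15000, 18: 4000, 24: 7000}
```

Because people settled after month 12 still trigger a payment at a later checkpoint, the provider keeps an incentive to support them rather than ‘park’ them.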

You will need to consider how you will mitigate perverse incentives so that the outcomes are in the best interests of the beneficiaries. If you refer to the guidance on designing a robust outcomes framework and make sure you address its three key points well, you should avoid perverse incentives.

Mitigating the impact of uncontrolled external factors

When setting outcome measures it is fundamental to avoid measures that can be unduly influenced by factors outside the control of the provider organisation (and other key stakeholders). It may be impossible to identify social outcomes that are entirely impervious to wider changes in social policy, demographics or the labour market. However, it is wise to avoid outcomes that can easily be under- or over-achieved due to external factors. These include:

- possible changes in government policy, e.g. an outcome relating to educational attainment might be affected by changes in the national curriculum

- planned service changes, e.g. an outcome from an intervention focused on children in need may be affected by the reorganisation of the public sector body responsible for supporting vulnerable

- changes in local practice, e.g. plans by another body to pay for a similar intervention aimed at the same cohort in the same area may undermine the validity of a comparison group

Whilst these external factors may be beyond the control of the parties, it is important to determine the potential impact they could have when designing an outcomes-based programme. It is wise to take the likely influencing factors into account in the metrics; their effects could be either positive or negative. It is also wise to have agreements with other practitioners so they all operate in a way that enables the outcomes to be achieved.

In some circumstances, outcome funders may accept obligations under the contract to manage the impact of these factors, but this is often not the case. It may be preferable to include the monitoring and reporting of these impacts as part of the contract management process, with the expectation that the commissioner will take steps to mitigate or avoid them where possible.

Examples of setting outcomes and targets

If you look at our case studies you will find examples of outcome measures and targets from impact bond projects developed across the world to tackle a range of social issues, from youth unemployment to lack of access to education and high-quality health services and facilities, homelessness, and poverty.

What does ‘good’ look like?

As there is no ‘correct’ or ‘set’ way to create outcomes, measures and targets, you may be questioning what good looks like. Having understood the considerations that have been outlined in this guide you are equipped to write a robust outcomes framework.

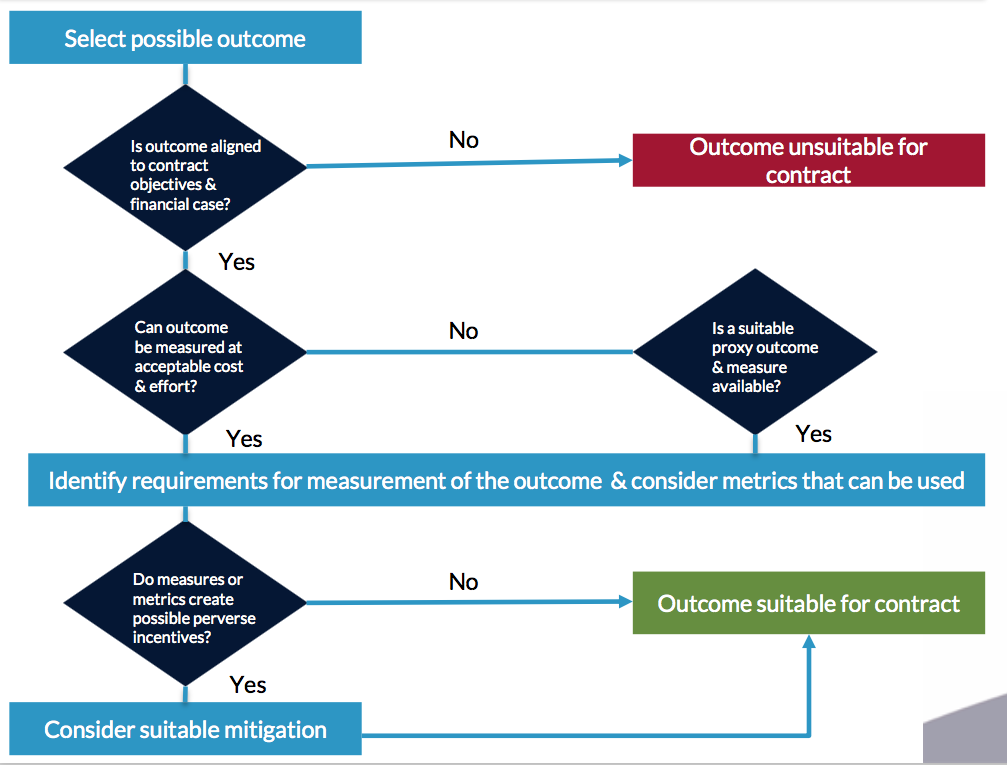

You can test your outcomes using the simple decision tree below. Once you have identified the outcomes and their appropriate measures you can check the outcomes definition section of the impact bond lifecycle to see what best practice will look like.

West London Zone

As part of the Collective Impact Bond Steering Group process, WLZ worked with key stakeholders in the area to analyse some of the challenges that children and young people growing up in the area face. The goal was to understand where to focus efforts, and what outcomes to aim for over the long term. Many areas were identified at each age stage.

Because of the inherent complexity of the problems they are seeking to address, collective impact initiatives tend to try to achieve multiple outcomes, which are in turn underpinned by many indicators.

However, as payment is directly linked to outcomes in the WLZ funding model, outcomes had to be identified that can be reliably measured, monitored and attributed to the programme. In practice, this required ongoing review and refinement of the outcomes framework in the early stages of the project.

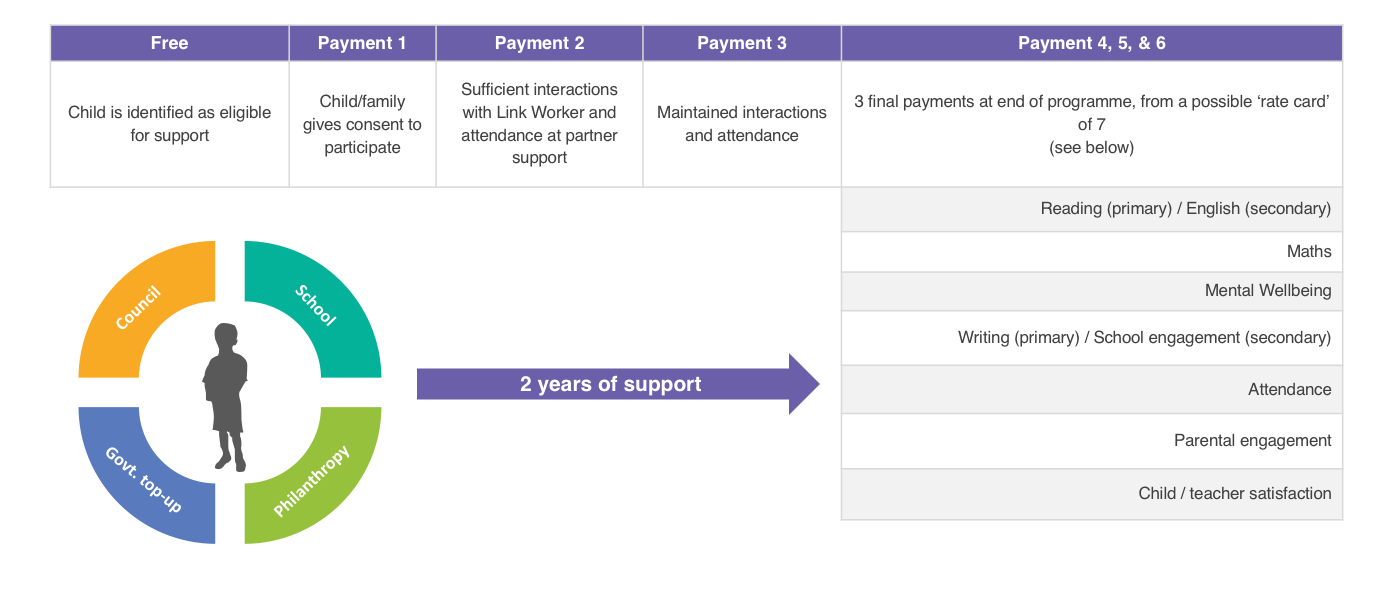

The framework underwent multiple iterations, and now covers four outcome areas - positive relationships, mental wellbeing, progress at school, and confidence/aspiration - with seven specific measures (related to those four areas) which can release payment at the end of each child's two-year programme. A previous version of the framework included eight outcome areas across three domains - wellbeing, learning and character.

Outcomes measurement is underpinned by a robust approach to data collection and analysis. To ensure robust monitoring of a young person's progress, WLZ collects data at regular time points from a range of sources:

- WLZ data collection survey (composite of self-report measures on a wide range of health, wellbeing, character and non-academic progress measures; the survey measures 19 risks in addition to school data) - Annually in October

- School administrative data (demographic information, attendance, attainment) - Annually in October

- Delivery partner data (weekly attendance, pre- and post- programme outcome measurement) - Weekly

- Link worker engagement notes (qualitative reporting on young people's engagement and progress) - Daily

While WLZ tracks its impact across a broad set of long-term outcomes, payment for outcomes is linked to specific measures across the two years of support provided to each child on the programme, as outlined in the diagram below.

You can view more examples of outcomes frameworks in the case studies on our website.

Further resources

There is a wealth of resources available for practitioners interested in developing and implementing outcomes-based approaches.

Big Society Capital Outcomes Matrix

Social Finance (2015) Technical Guide: Designing outcome metrics

Strengthening Nonprofits: A Capacity Builder’s Resource Library (2010) Measuring Outcomes

Acknowledgements

This guide was originally put together by Grace Young, Policy and Communications Officer, and updated for an international audience by Andreea Anastasiu, Senior Policy Engagement Officer. We are grateful to our partners who reviewed and contributed comments to this guide, and in particular to Neil Stanworth (ATQ Consultants & GO Lab Fellow of Practice) for his work on previous iterations.

We have designated this and other guides as ‘beta’ documents and we warmly welcome any comments or suggestions. We will regularly review and update our material in response. Please email your feedback to golab@bsg.ox.ac.uk.